How the “Poisson Preds” Page Works in Football Hacking (Streamlit + Poisson + Dixon–Coles)

A practical, beginner-friendly walkthrough of Poisson scoreline matrices, Dixon–Coles, and fair odds in Streamlit.

If you’ve ever wanted a clean, explainable baseline for football predictions—something you can implement in a few functions, test quickly, and ship into a web app—then the Poisson model is still one of the best places to start.

In the Football Hacking web app, the Poisson Predictions page (Poisson Preds) takes match-level data from your MongoDB pipeline, estimates expected goals for each team, builds a scoreline probability matrix with Poisson, applies a Dixon–Coles correction for low scores, and then converts that matrix into the “betting-friendly” views people actually want: 1X2, Double Chance, Draw No Bet, BTTS, and Over/Under lines—plus a heatmap that makes the whole thing instantly readable.

In this post, I’ll walk through your code in detail, but in a way that’s friendly to beginner/intermediate Python users who know pandas and basic stats, and are now leveling up into “real app logic.”

Open the Football Hacking web app and check the Poisson Preds page while you read—seeing the UI and the output tables side-by-side makes everything click faster.

You can also check it on GitHub.

Why Poisson Works (and Why It’s Not “Perfect”)

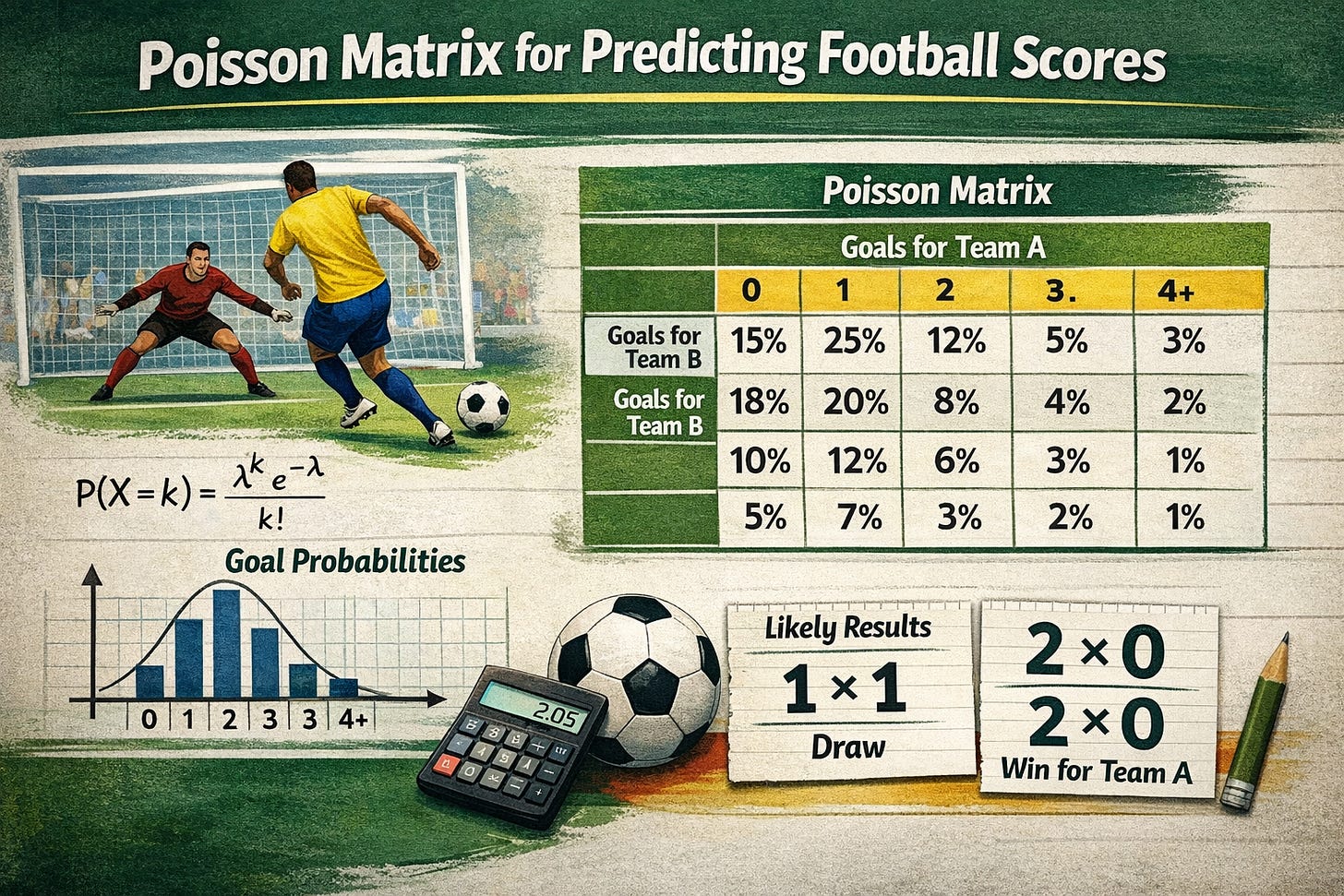

In football modeling, Poisson is popular because it maps a single parameter, λ (lambda), the expected number of goals, into a full distribution over goal counts:

P(X = k) = e^(−λ) · λ^k / k!, for k = 0, 1, 2, …

So if you estimate:

λ_home = expected home goals

λ_away = expected away goals

You can compute the probability of every realistic scoreline: 0–0, 1–0, 1–1, 2–1, and so on.
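To make that concrete, here is a minimal sketch of the Poisson formula using only the standard library. The value of lam_home is made up for illustration, and poisson_pmf is a hypothetical helper; the page itself uses scipy.stats.poisson.pmf, which should return the same values.

```python
import math

def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for a Poisson variable with mean lam."""
    return math.exp(-lam) * lam**k / math.factorial(k)

lam_home = 1.5  # illustrative expected home goals, not real data
probs = [poisson_pmf(k, lam_home) for k in range(7)]

for k, p in enumerate(probs):
    print(f"P(home scores {k}) = {p:.3f}")

# Goal counts 0..6 cover almost all of the probability mass at this lambda
print(f"covered mass: {sum(probs):.4f}")
```

Running the same list for λ_away gives the second half of what the scoreline matrix needs.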

The catch: goals aren’t perfectly independent. Low scorelines (0–0, 1–0, 0–1, 1–1) often behave slightly differently than vanilla Poisson suggests. That’s exactly why your page includes the Dixon–Coles adjustment.

The Big Picture: What This Script Does End-to-End

At a high level, your page logic has six layers:

Pull match stats from MongoDB (FotMob pipeline stored in db.fotmob_stats)

Transform raw stats into a tidy DataFrame (stats_to_df)

Let the user pick a league and choose teams (Streamlit UI + form)

Estimate expected goals from league averages + attack/defense “capacities” (get_goals_metrics)

Create a Poisson score matrix and apply Dixon–Coles (get_matrix_poisson + apply_dixon_coles_to_matrix)

Compute probabilities and fair odds for common markets + show a heatmap and a performance chart

That’s a solid architecture: small, testable functions + a clean UI orchestration section at the bottom.

Imports and Page Setup: Streamlit + Data + Viz + Stats

    import streamlit as st
    import pandas as pd
    import numpy as np
    import plotly.express as px
    from football_main_app import db
    from scipy.stats import poisson
    import matplotlib.pyplot as plt
    from matplotlib.colors import LinearSegmentedColormap
    import seaborn as sns

What matters here

Streamlit (st) is the UI engine.

pandas / numpy handle transformation and numeric work.

db comes from your main app module, and is presumably a MongoDB client/connection.

scipy.stats.poisson provides pmf and cdf.

matplotlib + seaborn render the “Weighted Performance” chart and the colormap for heatmaps.

You also import Plotly Express (px) but you don’t use it in this script. Not a problem, but you can remove it to keep things clean.

Then you configure the page:

    st.set_page_config(
        page_title='Poisson Predictions',
        layout='wide',
    )

Wide layout makes sense here because your UI is very “dashboard-y” (heatmap + chart + multiple tables).

MongoDB Fetch Layer: Cached for 12 Hours

    @st.cache_data(show_spinner=False, ttl='12h')
    def get_stats(year: int) -> dict:
        YEAR = year
        stats = list(db.fotmob_stats.aggregate([
            {"$match": {"general.season": {"$in": [f"{YEAR}", f"{YEAR-1}/{YEAR}"]}}},
            {"$project": {"_id": 0, "general": 1, "teams": 1, "stats": 1, "score": 1, 'result': 1}}
        ]))
        return stats

Why this is smart

@st.cache_data prevents re-hitting your database on every rerun.

ttl='12h' is a good tradeoff: the page stays fast, and cached results are never more than 12 hours old.

The aggregation pipeline does two important things:

$match limits by season strings that appear to be formatted either "2026" or "2025/2026"

$project returns only the fields you need, excluding _id

One small note: the function annotation says it returns dict, but you actually return a list of dicts. If you want to be precise, you could annotate it as list[dict].

Turning Raw Match Docs into a DataFrame

The stats_to_df function is doing the heavy lifting of converting nested MongoDB documents into a flat table.

Key features:

You store league/season/team names

You store team images (nice for future UI upgrades)

You compute a weighted performance feature per match for home and away

You store scoreline and result

weights = np.array([1.12, 1.25, 1.32, 1.50])

This means your weighted performance is not a naive average: it’s a hand-crafted blend where the last metric (xg_op_for_100_passes) carries the largest weight.

You build the values arrays:

    values_home = np.array([
        stat['stats']['ball_possession']['home'],
        stat['stats']['passes_opp_half_%']['home'],
        stat['stats']['touch_opp_box_100_passes']['home'],
        stat['stats']['xg_op_for_100_passes']['home']
    ])
    weighted_performance_home = np.average(values_home, weights=weights, axis=0)

Same for away.
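Here is a small sketch of what np.average does with those weights. The four match values are invented for illustration, and their scales are an assumption, since the post does not show how the stored stats are scaled. If the inputs really do sit on different scales (percentages next to per-100-pass rates), the larger-scale metrics dominate the blend regardless of the weights.

```python
import numpy as np

weights = np.array([1.12, 1.25, 1.32, 1.50])

# Hypothetical single-match values, in the same order as the weights:
# possession %, passes in opp. half %, touches in opp. box per 100 passes,
# open-play xG per 100 passes
values_home = np.array([55.0, 48.0, 6.0, 0.35])

weighted = np.average(values_home, weights=weights)

# Same result written out by hand: sum(w * x) / sum(w)
manual = (values_home * weights).sum() / weights.sum()

# How much each metric contributes to the final number
contributions = values_home * weights / weights.sum()

print(round(float(weighted), 3), contributions.round(3))
```

If that matters for your use case, one option is to standardize each metric (for example, z-scores per league) before weighting.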

Why this is a nice touch in a Poisson page

Even though Poisson predictions are about goals, your page also shows “performance context.” That’s huge from a product perspective: you’re not only spitting out odds; you’re helping the user understand why a team might be stronger in that venue context.

At the end you return:

    df = pd.DataFrame({
        'league': leagues,
        'season': seasons,
        'home': home,
        'home_image': home_images,
        'away': away,
        'away_image': away_images,
        'weighted_performance_home': weighted_performances_home,
        'weighted_performance_away': weighted_performances_away,
        'score_home': score_home,
        'score_away': score_away,
        'goals_sum': goals_sum,
        'result': results
    })

That becomes your “league dataset” driving everything else.

League and Venue Goal Averages

You compute a couple of helpful league-level baselines:

    def venue_goals_avg(df, column_home, column_away):
        home_goals_avg = df[column_home].mean()
        away_goals_avg = df[column_away].mean()
        return home_goals_avg, away_goals_avg

This is the home/away venue baseline for the league:

Average home goals

Average away goals

You also compute total goals average:

    def get_total_goals_avg(df, column):
        return df[column].mean()

These league-level averages are important because they anchor your expected goals estimates (λ). Without them, you’d be guessing in a vacuum.

Estimating Expected Goals for a Match

This function is the core of “where λ comes from”:

    def get_goals_metrics(df_league, column_team_home, home_team, column_home_scores,
                          columns_team_away, away_team, columns_away_scores,
                          total_home_avg, total_away_avg):

Inside it:

Step 1: compute how much the home team scores at home (within this league dataset)

home_scored_avg = df_league[df_league[column_team_home] == home_team][column_home_scores].mean()

Step 2: compute how much the away team scores away

away_scored_avg = df_league[df_league[columns_team_away] == away_team][columns_away_scores].mean()

Step 3: compute conceding averages (defense)

    home_conceced_avg = df_league[df_league[column_team_home] == home_team][columns_away_scores].mean()
    away_conceced_avg = df_league[df_league[columns_team_away] == away_team][column_home_scores].mean()

So:

home conceded = goals the opponents scored when this team was home

away conceded = goals the opponents scored when this team was away

Step 4: convert into “capacities” relative to league baseline

    home_of_cap = home_scored_avg / total_home_avg
    away_of_cap = away_scored_avg / total_away_avg
    home_def_cap = home_conceced_avg / total_away_avg
    away_def_cap = away_conceced_avg / total_home_avg

Interpretation:

home_of_cap > 1 means the home team scores more than league-average home teams (good attack at home)

away_def_cap > 1 means the away team concedes more than league-average (weak defense in away matches)

Step 5: expected goals are “attack × opponent defense × league baseline”

    home_goals = home_of_cap * away_def_cap * total_home_avg
    away_goals = away_of_cap * home_def_cap * total_away_avg

This is a classic strength model pattern. It’s simple, explainable, and works surprisingly well as a baseline.
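A worked example helps here. The numbers below are invented for illustration (they are not from any real league), and the variable names mirror the ones in get_goals_metrics with the spelling of “conceded” corrected.

```python
# Illustrative league baselines (goals per match), not real data
total_home_avg = 1.50
total_away_avg = 1.20

# Illustrative team averages in their venue
home_scored_avg = 1.80    # home team, goals scored at home
home_conceded_avg = 0.90  # home team, goals conceded at home
away_scored_avg = 1.00    # away team, goals scored away
away_conceded_avg = 1.65  # away team, goals conceded away

# Capacities relative to the league baseline
home_of_cap = home_scored_avg / total_home_avg     # 1.20: above-average home attack
away_of_cap = away_scored_avg / total_away_avg     # ~0.83: below-average away attack
home_def_cap = home_conceded_avg / total_away_avg  # 0.75: concedes less than average
away_def_cap = away_conceded_avg / total_home_avg  # 1.10: concedes more than average

# Expected goals = attack x opponent defense x league baseline
home_goals = home_of_cap * away_def_cap * total_home_avg
away_goals = away_of_cap * home_def_cap * total_away_avg

print(round(home_goals, 2), round(away_goals, 2))  # 1.98 0.75
```

Notice that the league baseline cancels once: home_goals is just home_scored_avg × away_conceded_avg / total_home_avg, which is a handy sanity check when debugging.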

Building the Poisson Scoreline Matrix

    def get_matrix_poisson(home_goals, away_goals, max_goals):
        home_probs = [poisson.pmf(i, home_goals) for i in range(max_goals)]
        away_probs = [poisson.pmf(i, away_goals) for i in range(max_goals)]
        goal_matrix = np.outer(home_probs, away_probs)
        return goal_matrix

Here’s the important idea:

home_probs[i] is P(home scores i)

away_probs[j] is P(away scores j)

np.outer(home_probs, away_probs) creates a matrix where each cell is:

P(home = i, away = j) = P(home scores i) × P(away scores j)

You set max_goals=7 later, meaning you model scorelines 0..6 for both teams.

Practical note: This “truncates” probabilities above 6 goals. For typical football λ values (like 0.8 to 2.0), the missing mass is small. If you ever start modeling leagues with higher scoring, you might bump this to 9 or 10.
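To see how much probability the truncation actually drops, here is a rough sketch that rebuilds the matrix with a standard-library Poisson formula and measures the missing mass. The helper names (poisson_pmf, score_matrix) and the λ pairs are assumptions for illustration; the page uses scipy.stats.poisson.pmf inside get_matrix_poisson.

```python
import math
import numpy as np

def poisson_pmf(k, lam):
    """P(X = k) for a Poisson variable with mean lam."""
    return math.exp(-lam) * lam**k / math.factorial(k)

def score_matrix(lam_home, lam_away, max_goals):
    """Rows index home goals, columns index away goals (0..max_goals-1)."""
    home = [poisson_pmf(i, lam_home) for i in range(max_goals)]
    away = [poisson_pmf(j, lam_away) for j in range(max_goals)]
    return np.outer(home, away)

missing = {}
for lam_home, lam_away in [(1.5, 1.1), (3.0, 2.5)]:
    for max_goals in (7, 10):
        mass = score_matrix(lam_home, lam_away, max_goals).sum()
        missing[(lam_home, lam_away, max_goals)] = 1.0 - mass
        print(f"lambdas=({lam_home}, {lam_away}) max_goals={max_goals} "
              f"missing mass={1.0 - mass:.4f}")
```

With typical λ values the 7×7 matrix loses only a fraction of a percent, while the high-scoring pair loses several percent, which is where a larger max_goals starts to pay off.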

Dixon–Coles Correction: Fixing Low-Score Dependence

This section is gold, because it handles the biggest “Poisson feels a bit off” issue.

You define the τ adjustment:

    def dc_tau(i, j, lam_home, lam_away, rho):
        if i == 0 and j == 0:
            return 1 - (lam_home * lam_away * rho)
        if i == 1 and j == 0:
            return 1 + (lam_away * rho)
        if i == 0 and j == 1:
            return 1 + (lam_home * rho)
        if i == 1 and j == 1:
            return 1 - rho
        return 1.0

Then apply it only to the (0,0), (1,0), (0,1), (1,1) cells:

    def apply_dixon_coles_to_matrix(P, lam_home, lam_away, rho, normalize=True):
        P_dc = P.astype(float).copy()
        for i in (0, 1):
            for j in (0, 1):
                P_dc[i, j] *= dc_tau(i, j, lam_home, lam_away, rho)
        if normalize:
            s = P_dc.sum()
            P_dc /= s
        return P_dc

Why normalize?

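Because the matrix you end up with doesn’t sum to exactly 1. Interestingly, the Dixon–Coles tweaks themselves cancel out: the changes to the four low-score cells add up to zero in total. The shortfall comes from truncating the matrix at max_goals, and normalization rescales the cells back into a valid probability distribution.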
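The sketch below applies the same τ factors to a small example and prints the matrix sum before and after the correction. The λ values and rho are illustrative, and poisson_pmf is a hypothetical stand-in for scipy.stats.poisson.pmf.

```python
import math
import numpy as np

def poisson_pmf(k, lam):
    return math.exp(-lam) * lam**k / math.factorial(k)

def dc_tau(i, j, lam_home, lam_away, rho):
    """Dixon-Coles factor for the four low-score cells, 1.0 elsewhere."""
    if i == 0 and j == 0:
        return 1 - (lam_home * lam_away * rho)
    if i == 1 and j == 0:
        return 1 + (lam_away * rho)
    if i == 0 and j == 1:
        return 1 + (lam_home * rho)
    if i == 1 and j == 1:
        return 1 - rho
    return 1.0

lam_home, lam_away, rho = 1.4, 1.1, -0.05  # illustrative values
P = np.outer([poisson_pmf(i, lam_home) for i in range(7)],
             [poisson_pmf(j, lam_away) for j in range(7)])

P_dc = P.copy()
for i in (0, 1):
    for j in (0, 1):
        P_dc[i, j] *= dc_tau(i, j, lam_home, lam_away, rho)

raw_sum = P_dc.sum()  # sum after the correction, before normalizing
P_dc /= raw_sum       # rescale so the matrix sums to 1

print(f"sum before DC: {P.sum():.6f}, after DC: {raw_sum:.6f}")
print(f"0-0: {P[0, 0]:.4f} -> {P_dc[0, 0]:.4f}")
print(f"1-0: {P[1, 0]:.4f} -> {P_dc[1, 0]:.4f}")
```

With a negative rho, 0–0 and 1–1 gain probability while 1–0 and 0–1 lose it, and the two sums printed on the first line match.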

Where does rho come from in your code?

    def get_rho(goals_avg):
        if goals_avg >= 3.0:
            rho = -0.02
        elif goals_avg <= 2.6:
            rho = -0.1
        else:
            rho = -0.05
        return rho

This is a heuristic: lower-scoring leagues get stronger negative rho (more correction effect). It’s a nice practical choice for an app feature—simple and stable.

Converting Matrix → Tables and Markets

Heatmap table (scoreline probabilities)

    def matrix_to_df(goal_matrix, home_team, away_team):
        max_goals = goal_matrix.shape[0]
        goals_table = pd.DataFrame(
            goal_matrix,
            columns=[f"{away_team} {i}" for i in range(max_goals)],
            index=[f"{home_team} {i}" for i in range(max_goals)]
        )
        goals_table = goals_table * 100
        return goals_table

This just formats the matrix into a readable DataFrame and converts to percentage.

1X2 + Double Chance + Draw No Bet

    home_win_prob = np.tril(goal_matrix, k=-1).sum() * 100
    draw_prob = goal_matrix.diagonal().sum() * 100
    away_win_prob = np.triu(goal_matrix, k=1).sum() * 100

np.tril(..., k=-1) = below the diagonal → home goals > away goals

diagonal = draw

np.triu(..., k=1) = above the diagonal → away goals > home goals

Then:

Double chance “Home” = home win + draw

Double chance “Away” = away win + draw

“No Draw” = home win + away win

Draw No Bet is derived by conditioning on “a win occurs”:

    any_win_double_chance_prob = home_win_prob + away_win_prob
    home_handicap_0 = home_win_prob / any_win_double_chance_prob * 100

That’s exactly what DNB is: probability of home winning given the match is not a draw.

Finally, you compute “fair odds”:

'Odd': np.round(100 / home_win_prob, 2)

Because you’re working in percent. (If you were in decimals, it would be 1/prob.)
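Here is the whole 1X2 → Draw No Bet → fair odds chain on a toy 3×3 matrix, small enough to check by hand. The matrix values are invented and sum to 1; rows are home goals and columns are away goals, matching np.outer(home_probs, away_probs). The name home_dnb_prob is a stand-in for the page’s home_handicap_0.

```python
import numpy as np

# Toy scoreline matrix: rows = home goals 0..2, columns = away goals 0..2
goal_matrix = np.array([
    [0.10, 0.08, 0.04],
    [0.15, 0.12, 0.06],
    [0.20, 0.15, 0.10],
])

# 1X2 probabilities in percent, as on the page
home_win_prob = np.tril(goal_matrix, k=-1).sum() * 100  # below the diagonal
draw_prob = goal_matrix.diagonal().sum() * 100
away_win_prob = np.triu(goal_matrix, k=1).sum() * 100   # above the diagonal

# Draw No Bet: home win conditional on the match not being a draw
home_dnb_prob = home_win_prob / (home_win_prob + away_win_prob) * 100

# Fair decimal odds from a probability expressed in percent
home_odd = np.round(100 / home_win_prob, 2)
draw_odd = np.round(100 / draw_prob, 2)

print(home_win_prob, draw_prob, away_win_prob)
print(round(float(home_dnb_prob), 2), home_odd, draw_odd)
```

By hand: home win = 0.15 + 0.20 + 0.15 = 50%, draw = 0.10 + 0.12 + 0.10 = 32%, away win = 18%, so the fair home odd is 100 / 50 = 2.00.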

BTTS: Both Teams To Score

    btts_yes = goal_matrix[1:, 1:].sum()
    btts_no = 1 - btts_yes

This is a very clean slice:

[1:, 1:] means goals ≥ 1 for both teams.

Then fair odds are 1 / probability.
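A quick way to convince yourself the slice is right is to cross-check it with inclusion–exclusion: P(both score) = 1 − P(home = 0) − P(away = 0) + P(0–0). The toy matrix below is invented and sums to 1, which is what btts_no = 1 − btts_yes relies on (the normalized Dixon–Coles matrix satisfies this).

```python
import numpy as np

# Toy scoreline matrix: rows = home goals 0..2, columns = away goals 0..2
goal_matrix = np.array([
    [0.10, 0.08, 0.04],
    [0.15, 0.12, 0.06],
    [0.20, 0.15, 0.10],
])

# Slice version: drop the row and column where a team scores 0
btts_yes = goal_matrix[1:, 1:].sum()
btts_no = 1 - btts_yes

# Cross-check with inclusion-exclusion
p_home_zero = goal_matrix[0, :].sum()
p_away_zero = goal_matrix[:, 0].sum()
btts_check = 1 - p_home_zero - p_away_zero + goal_matrix[0, 0]

# Fair decimal odds from probabilities in 0..1
odd_yes = 1 / btts_yes
odd_no = 1 / btts_no

print(round(float(btts_yes), 2), round(float(btts_check), 2))
```

Both routes give 0.43 for this matrix.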

Over/Under Lines (0.5 to 5.5)

Instead of summing the matrix by total goals, you use a Poisson shortcut:

    goals = home_goals + away_goals
    under = poisson.cdf(i, goals)  # i is the loop index: 0 for the 0.5 line, 1 for 1.5, ...
    over = 1 - under

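This works because the sum of two independent Poisson variables is also Poisson:

If X ~ Poisson(λ_home) and Y ~ Poisson(λ_away) are independent, then X + Y ~ Poisson(λ_home + λ_away).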

So totals are easy and fast.

You loop 0..5, creating O/U 0.5 … 5.5 markets.
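If you want to verify the shortcut numerically, the sketch below convolves the two goal distributions and compares each total with a single Poisson at λ_home + λ_away. The λ values are illustrative, and poisson_pmf is a hypothetical standard-library stand-in for scipy.stats.poisson.pmf.

```python
import math

def poisson_pmf(k, lam):
    return math.exp(-lam) * lam**k / math.factorial(k)

lam_home, lam_away = 1.4, 1.1  # illustrative values
goals = lam_home + lam_away

checks = []
for total in range(6):
    # P(total goals) by summing every home/away split of that total
    conv = sum(poisson_pmf(i, lam_home) * poisson_pmf(total - i, lam_away)
               for i in range(total + 1))
    direct = poisson_pmf(total, goals)
    checks.append((conv, direct))

# Under/Over 2.5 from the single Poisson: totals 0, 1, 2 are "under"
under_2_5 = sum(poisson_pmf(k, goals) for k in range(3))
over_2_5 = 1 - under_2_5

print(f"Under 2.5: {under_2_5:.4f}  Over 2.5: {over_2_5:.4f}")
```

The two columns in checks agree to floating-point precision. Keep in mind that this shortcut describes the plain independent Poisson model, so it will not exactly match totals read off the Dixon–Coles adjusted matrix.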

Formatting Probabilities into a MultiIndex Table

    def probs_to_df(probs):
        reform = {(outerKey, innerKey): values
                  for outerKey, innerDict in probs.items()
                  for innerKey, values in innerDict.items()}
        df = pd.DataFrame.from_dict(reform, orient='index').transpose()
        df.columns = pd.MultiIndex.from_tuples(df.columns)
        return df.round(2)

This is a neat pattern: it produces a single DataFrame with MultiIndex columns like:

(“Match Odds”, “Home”) → {Prob, Odd}

(“Match Odds”, “Draw”) → {Prob, Odd}

etc.

It’s perfect for a dashboard.
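To see the reshaping in action, here is the function run on a small hand-written dictionary. The input shape (market → outcome → {"Prob", "Odd"}) is an assumption inferred from the column layout described above; the dictionary the page actually builds may differ.

```python
import pandas as pd

def probs_to_df(probs):
    # Flatten {market: {outcome: {...}}} into {(market, outcome): {...}}
    reform = {(outer_key, inner_key): values
              for outer_key, inner_dict in probs.items()
              for inner_key, values in inner_dict.items()}
    df = pd.DataFrame.from_dict(reform, orient='index').transpose()
    df.columns = pd.MultiIndex.from_tuples(df.columns)
    return df.round(2)

# Hypothetical input; values are illustrative
probs = {
    "Match Odds": {
        "Home": {"Prob": 50.0, "Odd": 2.0},
        "Draw": {"Prob": 32.0, "Odd": 3.125},
        "Away": {"Prob": 18.0, "Odd": 5.556},
    },
    "BTTS": {
        "Yes": {"Prob": 43.0, "Odd": 2.326},
        "No": {"Prob": 57.0, "Odd": 1.754},
    },
}

table = probs_to_df(probs)
print(table)
```

The result has two rows (Prob and Odd) and one column per (market, outcome) pair, which is why the styling step can target the Odd row on its own.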

Styling: Highlighting the “ROI Zone” Odds

    styled_df = df.style.highlight_between(
        color='#5A915A',
        left=1.7,
        right=2,
        axis=1,
        subset=pd.IndexSlice[['Odd'], :]
    ).format(precision=2)

This is a Football Hacking signature detail: it visually emphasizes odds between 1.70 and 2.00 (your “historical ROI sweet spot” narrative).

Also note: you highlight only the Odd rows via subset=pd.IndexSlice[['Odd'], :].

Plotting Weighted Performance Bars (Home vs Away Context)

    def plot_venue_performances(df, home_team, away_team):
        df_home = df[df['home'] == home_team]
        df_away = df[df['away'] == away_team]
        fig, ax = plt.subplots(figsize=(8, 5))
        ax.set_title('Weighted Performance Home/Away', fontweight='bold')
        ...
        sns.barplot(data=df_home, x='home', y='weighted_performance_home', ax=ax,
                    color='#213991')
        sns.barplot(data=df_away, x='away', y='weighted_performance_away', ax=ax,
                    color='#915221')

This chart provides narrative support: you’re not just modeling goals—you’re showing performance metrics that correlate with attacking dominance (possession, touches in box per 100 passes, open-play xG per 100 passes, etc.).

The UI Orchestration: Where Everything Comes Together

At the bottom:

Load data:

    stats = get_stats(year=2026)
    df = stats_to_df(stats=stats)

Select league:

    league = st.selectbox(... options=sorted(df['league'].unique()))
    df_league = df[df['league'] == league]

Show dataset:

    st.dataframe(df_league.drop(columns=['home_image', 'away_image']), hide_index=True)

Select teams with a form:

    submitted, home, away = input_form(...)

On submit, compute everything:

    total_home_avg, total_away_avg = venue_goals_avg(...)
    home_goals, away_goals = get_goals_metrics(...)
    rho = get_rho(...)
    goal_matrix = get_matrix_poisson(... max_goals=7)
    goal_matrix = apply_dixon_coles_to_matrix(...)

Produce tables:

    match_probs = get_match_probs(goal_matrix)
    btts = get_btts_probs(goal_matrix)
    goal_probs = get_unders_overs(home_goals, away_goals)

Render heatmap + chart:

    st.dataframe(goals_table.style.background_gradient(...))
    plot_venue_performances(...)

Render market tables and warnings:

    st.warning('These are the fair odds according to the Poisson distribution...')

That warning is important: it sets expectations and protects users from confusing “fair odds” with bookmaker prices.

Practical Notes and “Next Improvements” (If You Want to Evolve It)

If you ever want to push this page from “strong baseline” to “seriously sharp,” here are upgrade paths that keep your current architecture:

Dynamic max_goals: set it based on λ (e.g., 7 for low λ, 10 for high λ)

Totals from the matrix: for consistency, derive totals by summing the matrix anti-diagonals (all cells with the same i + j), rather than using the Poisson-sum shortcut, especially after the Dixon–Coles adjustment

Team sample size guardrails: if a team has few matches in the selected season, shrink estimates toward the league average (simple Bayesian shrinkage)

Home advantage parameter: explicit multiplier applied to λ_home or to attack/defense capacities

In-play version: update λ based on time remaining and game state (you’ve already explored this idea elsewhere)
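As a starting point for the “totals from the matrix” idea, here is a minimal sketch. The function names are hypothetical, and the toy matrix is invented; in the app you would pass the normalized Dixon–Coles matrix instead.

```python
import math
import numpy as np

def totals_from_matrix(goal_matrix):
    """Probability of each total goal count, summing cells with equal i + j."""
    n = goal_matrix.shape[0]
    totals = np.zeros(2 * n - 1)
    for i in range(n):
        for j in range(n):
            totals[i + j] += goal_matrix[i, j]
    return totals

def over_under_from_matrix(goal_matrix, line):
    """Return (under, over) for a half-goal line such as 2.5."""
    totals = totals_from_matrix(goal_matrix)
    under = totals[: math.floor(line) + 1].sum()  # 2.5 -> totals 0, 1, 2
    over = totals.sum() - under
    return under, over

# Toy scoreline matrix: rows = home goals 0..2, columns = away goals 0..2
goal_matrix = np.array([
    [0.10, 0.08, 0.04],
    [0.15, 0.12, 0.06],
    [0.20, 0.15, 0.10],
])

totals = totals_from_matrix(goal_matrix)
under_2_5, over_2_5 = over_under_from_matrix(goal_matrix, 2.5)
print(totals.round(2), round(float(under_2_5), 2), round(float(over_2_5), 2))
```

Because the Over/Under numbers now come from the same matrix as 1X2 and BTTS, every market on the page reflects the same low-score correction.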

Final Takeaway

This page is a great example of what “Football Hacking style” looks like in code:

Clean data pipeline → tidy dataframe

Explainable expected goals estimate

Poisson matrix for full scoreline distribution

Dixon–Coles correction for realism at low scores

Market probabilities + fair odds

UI that teaches while it predicts

That’s not just a prediction tool—it’s a learning tool, which is exactly what makes it Substack-friendly.

If you want to explore Poisson predictions across leagues and matchups (and see the probability heatmap + fair odds instantly), open the Football Hacking web app and head to Poisson Preds. And if you’re reading this on Substack, consider becoming a premium subscriber—I’ll be publishing deeper builds that go beyond baseline Poisson (and show how to make it survive real-world match dynamics).
